Individual chats with ChatGPT have been exposed publicly in Google search results. According to TechCrunch, ChatGPT included a feature that let users generate a shareable URL for a conversation with the chatbot to send to friends or colleagues.

However, reports revealed that some of these shared chats were appearing in search engine results on platforms like Google, Bing, and DuckDuckGo. These conversations could be found by filtering results to a specific ChatGPT domain. OpenAI clarified that this was part of an experimental feature, which has since been turned off.

The indexed pages displayed conversations that users had shared through the platform’s sharing feature. Users may have intended these conversations for a limited audience, but they became discoverable by anyone running a relevant search on Google.

Fast Company was the first to report, on Wednesday, that some ChatGPT conversations were showing up in Google search results. The issue gained more attention on Thursday, when newsletter writer Luiza Jarovsky posted on X that private chats shared through ChatGPT’s link feature were being made public. She pointed out that using this feature could allow those conversations to be indexed by Google.

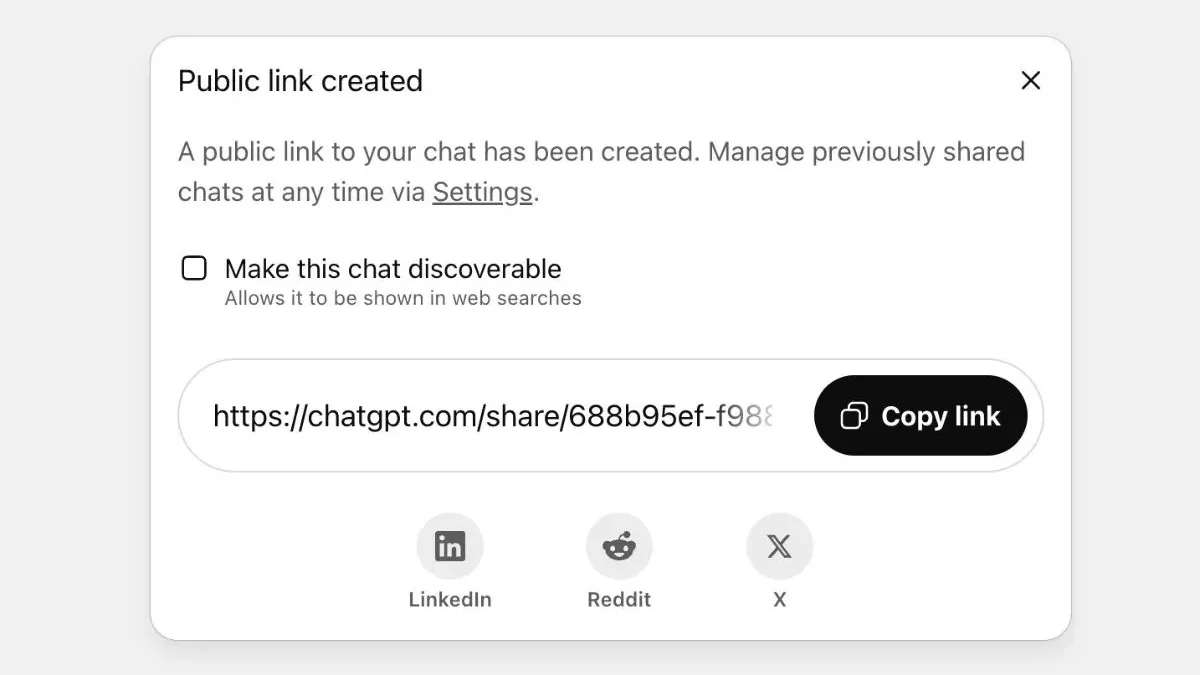

The feature asked users to take specific steps to share their chats, such as checking a box to “make this chat discoverable,” with a note that it could appear in web searches. While the shared chats were anonymized to lower the chance of personally identifying users, they were still accessible online.

Addressing users’ privacy concerns, OpenAI issued a statement explaining that the indexed conversations were part of an experimental feature. OpenAI emphasized that only conversations explicitly shared by users through the platform’s sharing mechanism were affected by this issue.

The company clarified that private conversations that were not shared remained secure and were not indexed by search engines. However, this explanation did little to ease concerns among users who felt that the sharing feature’s implications were not clearly communicated.

Dane Stuckey, OpenAI’s Chief Information Security Officer, announced in a social media post on Thursday, “We just removed a feature from @ChatGPTapp that allowed users to make their conversations discoverable by search engines, such as Google.” He added, “Ultimately we think this feature introduced too many opportunities for folks to accidentally share things they didn’t intend to, so we’re removing the option. We’re also working to remove indexed content from the relevant search engines. This change is rolling out to all users through tomorrow morning.”

The incident involving shared chats found online has significant implications for user trust in AI platforms. Many users rely on these systems for sensitive conversations, assuming that their interactions remain private unless explicitly made public.

The exposure has led some users to reconsider how they interact with ChatGPT and similar AI systems. It serves as a reminder that users need to be careful about what information they share, even when using features that appear to offer limited sharing options.

The long-term impact on ChatGPT’s user base remains to be seen, but the incident has certainly highlighted the need for clearer privacy policies and better user education about data sharing implications.