Top 20 Data Science Tools – The Ultimate Guide

Introduction

In today's technology-driven world, Artificial Intelligence and Machine Learning have spread across business organizations in much the same way that software development did. With the help of Data Science tools and frameworks, we can handle large datasets, extract the information we need from them, and decide which algorithm is best suited to which model.

Machine Learning within Data Science has been called the hottest job of the 21st century, and it offers plenty of opportunities to both experienced developers and fresh IT graduates. As a result, programmers everywhere are trying to learn and keep up with the latest tools and techniques.

In this blog, we have shortlisted the top 20 Data Science tools available today so that we can build better model solutions for our software.

This blog aims to be a guide that broadens your knowledge and helps you explore the world of Data Science. The rundown of machine learning tools here is only a beginning and will keep being updated. So, without much delay, let's start:

1. TensorFlow:

TensorFlow is considered one of the outstanding open-source machine learning frameworks, and it is built around dataflow programming. It can deal with a wide range of datasets, and it supports regression, classification, and more complex computations.

This machine learning tool works well on both CPUs and GPUs. It comes in several versions and offers a range of models that help us deal with large amounts of data. It runs on a wide variety of devices, so it is versatile and widely used for development. The one thing to note is that TensorFlow takes extra effort to learn, as it is quite intricate.

2. SCIKIT-LEARN:

Scikit-Learn is perhaps the most useful library for ML in Python. Built on NumPy, SciPy, and Matplotlib, it contains a variety of efficient tools for machine learning and statistical modeling. These include classification, regression, clustering, dimensionality reduction, model selection, and preprocessing.

Since it is an open-source library with an active community, it is continually being improved. Scikit-Learn can be applied to a wide variety of tasks with no sacrifice in speed. It has a clean API and is strongly recommended for data mining problems. If you are looking to build models, this is among the best frameworks in the business.
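To give a feel for that API, here is a minimal sketch that chains preprocessing and classification and then evaluates the result. The choice of the bundled Iris dataset, the logistic regression model, and the 25% test split are our own assumptions for illustration, not something the library prescribes.

```python
from sklearn.datasets import load_iris
from sklearn.linear_model import LogisticRegression
from sklearn.model_selection import train_test_split
from sklearn.pipeline import make_pipeline
from sklearn.preprocessing import StandardScaler

# Load a small built-in dataset (an illustrative choice).
X, y = load_iris(return_X_y=True)

# Hold out a quarter of the rows for evaluation.
X_train, X_test, y_train, y_test = train_test_split(
    X, y, test_size=0.25, random_state=0, stratify=y
)

# Preprocessing and the classifier are chained into one estimator.
clf = make_pipeline(StandardScaler(), LogisticRegression(max_iter=1000))
clf.fit(X_train, y_train)

# Mean accuracy on the held-out rows.
accuracy = clf.score(X_test, y_test)
print(f"test accuracy: {accuracy:.2f}")
```

Every estimator follows the same fit/predict/score pattern, so swapping in a different model usually means changing a single line.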

3. CAFFE:

Convolutional Architecture for Fast Feature Embedding, better known as Caffe, is a machine learning framework written in C++. It is known for its fast training of deep neural networks. Caffe comes with Python and MATLAB interfaces, which make it quicker to put to work.

It can process over 60 million images in a single day on a single GPU. It even lets us switch between GPU and CPU with a single flag. There is an ongoing debate about R versus Python for machine learning solutions. On the whole, Python has gained more trust from developers than R.

4. AMAZON MACHINE LEARNING (AML):

Amazon Machine Learning is a cloud-based machine learning service, used by developers all over the world to build ML models and generate predictions. The best part is that it can be used by all developers, whether beginner or expert, and it works well on both desktop and Android platforms.

AML supports three kinds of ML models: binary classification, multi-class classification, and regression. It can pull in data from various sources such as Amazon S3, RDS, and Redshift. It also lets us create data source objects from a MySQL database.

5. MICROSOFT CNTK:

Microsoft Cognitive Toolkit is a deep learning toolkit provided by Microsoft. It is built around algorithms modeled on the way the human brain learns. It works well for Machine Learning and Artificial Intelligence. We can use the framework to build models for our business, and it can be customized according to our needs.

We can easily choose our own networks, metrics, algorithms, and more. It also supports multi-machine, multi-GPU back ends. This is an excellent framework if you need your own customized model. Note, too, that the tool is particularly well suited to algorithms modeled on the human brain, that is, neural networks.

6. APACHE SPARK MLlib:

Apache Spark MLlib is a scalable ML library that runs on Apache Mesos, Hadoop, and Kubernetes, either standalone or in the cloud. It includes the standard ML algorithms and utilities, such as classification, regression, clustering, collaborative filtering, and dimensionality reduction.

The main aim of this tool is to make practical Machine Learning scalable and easy. Spark MLlib offers tools such as ML algorithms, Featurization (feature extraction, transformation, dimensionality reduction, and selection), Pipelines (for constructing, evaluating, and tuning ML pipelines), Persistence (for saving and loading algorithms, models, and pipelines), and Utilities (for linear algebra, statistics, and data handling).

7. SHOGUN:

Shogun is perhaps the best-known C++-based ML framework in use today. C++ is a large and advanced programming language, and building a Machine Learning platform in it requires in-depth understanding and knowledge. Although the core is written in C++, Shogun provides interfaces for several languages and platforms, such as MATLAB, Python, R, Octave, and Java. It is well known for the range of ML models it offers.

Its models range from SVMs to Markov models and so on. Because it offers such a wide range of models on a solid language base like C++, it is strongly research-oriented. Many IT companies working on ML projects use Shogun widely. Shogun provides models and algorithms for nearly all kinds of learning methods in ML and, to a degree, supports neural networks too. In the Shogun framework, we get components such as CLIBSVM; each name has a capital C in front of it.

8. MOA:

Massive Online Analysis, abbreviated as MOA, is the most popular open-source framework for data stream mining, with an active and growing community. It includes a collection of machine learning algorithms (classification, regression, clustering, outlier detection, concept drift detection, and recommender systems) and tools for evaluation. Related to the WEKA project, MOA is also written in Java, while scaling to more demanding problems.

9. VELES:

Veles is a distributed platform for deep learning applications. It is written in C++, although it uses Python for automation and coordination between nodes. Datasets can be analyzed and automatically normalized before being fed to the cluster, and a REST API allows the trained model to be used in production right away. It focuses on performance and flexibility. It has few hard-coded entities and enables the training of all the widely recognized topologies, such as fully connected nets, convolutional nets, recurrent nets, and so on.

10. APACHE MAHOUT:

Apache Mahout is an open-source Machine Learning project focused on collaborative filtering as well as classification. Its implementations are an extension of the Apache Hadoop platform. While it is still in progress, the number of algorithms it supports has been growing significantly. Since it is implemented on top of Hadoop, it uses the Map/Reduce paradigm.

Some of the distinctive features of Apache Mahout are that it provides an expressive Scala DSL and a distributed linear algebra framework for deep learning computations. It also provides native solvers for CPUs and GPUs, as well as CUDA accelerators.

11. ORYX 2:

Oryx 2 uses the Lambda Architecture for real-time, large-scale machine learning. It is built on top of Apache Spark and includes packaged functions for rapid prototyping and building applications. It supports end-to-end model development for collaborative filtering, classification, regression, and clustering tasks.

Oryx 2 consists of the following three tiers. The first is a generic lambda tier that provides speed and serving layers that are not specific to Machine Learning. The second is a specialization that provides ML abstractions for selecting hyperparameters. The third tier is an end-to-end implementation of standard ML applications.

12. APPLE’S CORE ML:

Core ML is a machine learning framework by Apple. It offers a straightforward way for users to integrate machine learning models into their mobile applications. The simple process requires users to drop the machine learning model file into their project, and Xcode automatically builds a Swift or Objective-C wrapper class.

It also supports domain-specific frameworks and functionality. Core ML works with Vision for image analysis, GameplayKit for evaluating learned decision trees, and Natural Language for natural language processing. It is optimized for on-device performance and builds on top of low-level primitives.

13. THEANO:

Theano is a Python library that lets us define, optimize, and evaluate mathematical expressions, especially ones involving multi-dimensional arrays (numpy.ndarray). Using Theano, it is possible to reach speeds rivaling hand-crafted C implementations for problems involving large amounts of data. It was written at the LISA lab to support the rapid development of efficient machine learning algorithms.

We have all heard of the Pythagorean theorem and have applied it in many fields. Theano is named after Pythagoras' wife, who was also a great Greek mathematician. The library is released under a BSD license.

14. WEKA:

Weka provides machine learning algorithms that assist in data mining. Its major features are classification, visualization, data preparation, regression, data mining, and clustering. It also comes with online courses and is straightforward enough to be quite useful for students.

Regression is a method used to find the relationship between a dependent variable 'y' and one or more independent variables; in the linear case, that relationship is represented by a straight line. We won't discuss Simple Linear Regression in detail in this blog because it is a vast topic, but I would suggest you develop an in-depth understanding of it, as it will help a lot later on.
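For a quick taste of what fitting such a straight line looks like, here is a minimal sketch of ordinary least squares for one independent variable, in plain Python. The function name `fit_line` and the sample points are made up for illustration and have nothing to do with Weka's own API.

```python
def fit_line(xs, ys):
    """Return (slope, intercept) of the least-squares line y = slope * x + intercept."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    # Spread of x around its mean, and how x and y vary together.
    sxx = sum((x - mean_x) ** 2 for x in xs)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    slope = sxy / sxx
    intercept = mean_y - slope * mean_x
    return slope, intercept


# Hypothetical sample points that lie exactly on y = 2x + 1.
xs = [1.0, 2.0, 3.0, 4.0, 5.0]
ys = [3.0, 5.0, 7.0, 9.0, 11.0]

slope, intercept = fit_line(xs, ys)
print(slope, intercept)
```

With real, noisy data the points will not sit exactly on the line, and the same formulas give the line that minimizes the sum of squared vertical distances.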

15. COLAB:

Google Colab is one of the best cloud-based services for working in Python. It supports libraries such as PyTorch, Keras, TensorFlow, and OpenCV to help us build machine learning applications. Its major highlights are that it aids machine learning education and research, and that we can access it easily through our Google Drive.

16. ACCORD.NET:

Accord.NET offers Machine Learning libraries for image and audio processing. It provides algorithms for numerical linear algebra, numerical optimization, statistics, and artificial neural networks, as well as for image, audio, and signal processing. It also offers support for graph plotting and visualization libraries. A major advantage is that the libraries are available as source code, as an executable installer, and through the NuGet package manager.

17. RAPID MINER:

Rapid Miner is a platform for machine learning, deep learning, text mining, data preparation, and predictive analytics. Research, education, and application development are the areas in which Rapid Miner is used the most.

Its main highlight is a GUI that helps in designing and executing analytical workflows. It helps with data preparation, result visualization, and model validation and optimization. It has proved beneficial for developers because it is extensible through plugins, easy to use, and requires only limited programming skills.

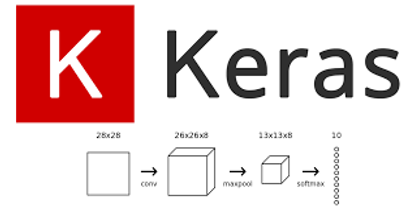

18. KERAS:

Keras is an open-source neural network library for Python. It is popular for its modularity, speed, and ease of use, which makes it highly advantageous for quick experimentation and fast prototyping. It supports Convolutional Neural Networks, Recurrent Neural Networks, and combinations of the two.

It runs seamlessly on both CPU and GPU. Compared with more widely known libraries like TensorFlow and PyTorch, Keras offers a user-friendliness that lets users readily implement neural networks without dwelling on the technical jargon.
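As a rough illustration of that ease of use, the sketch below defines and compiles a small classifier in a handful of lines. It assumes Keras is installed as part of TensorFlow 2.x; the layer sizes, the four input features, and the three output classes are arbitrary choices made for this example.

```python
import numpy as np
from tensorflow import keras


def build_model(input_dim: int = 4, n_classes: int = 3) -> keras.Model:
    """Build a small fully connected classifier (sizes are illustrative)."""
    model = keras.Sequential([
        keras.Input(shape=(input_dim,)),
        keras.layers.Dense(16, activation="relu"),
        keras.layers.Dense(n_classes, activation="softmax"),
    ])
    model.compile(
        optimizer="adam",
        loss="sparse_categorical_crossentropy",
        metrics=["accuracy"],
    )
    return model


model = build_model()

# Random inputs stand in for real data; the untrained model still
# returns one probability per class for every row.
x = np.random.rand(8, 4).astype("float32")
probs = model.predict(x, verbose=0)
print(probs.shape)
```

Training is then a single call to `model.fit` with features and integer labels, and the same code runs on CPU or GPU without changes.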

19. MLPACK:

mlpack is a C++-based machine learning library first released in 2011 and designed for scalability, speed, and ease of use, according to the library's creators. mlpack can be used through a cache of command-line executables for quick, black-box operations, or through a C++ API for more sophisticated work. It provides its algorithms as simple command-line programs and C++ classes, which can then be integrated into larger-scale machine learning solutions.

20. PATTERN:

Pattern is a web mining module for the Python programming language. It has tools for data mining (Google, Twitter, and Wikipedia API, a web crawler, an HTML DOM parser), natural language processing (part-of-speech taggers, n-gram search, sentiment analysis, WordNet), machine learning (vector space model, clustering, SVM), network analysis, and <canvas> visualization.

Author Bio:

Ram Tavva is a Senior Data Scientist and an alumnus of IIM-C (Indian Institute of Management – Kolkata) with over 25 years of professional experience, specializing in Data Science, Artificial Intelligence, and Machine Learning.